2026-03-19

By Esther Wong

The conversation that crystallized this thesis happened at a side table during a reception at GTC 2026, over mediocre pinot noir. A Lenovo APAC executive told me that hardware orders for secondary compute (mid-range servers, edge devices, desktop AI rigs) were seeing an uptick in Southeast Asia and Greater China. The buyers were not so much hyperscalers as mid-market companies and developers who wanted to run AI agents locally using open-source frameworks. The price and speed of inference, combined with the arrival of OpenClaw, meant it now made economic sense to host your own. What happens when tokens become cheap enough to commoditize?

1. The OpenClaw Moment and GTC's Sleeper Announcement

At GTC, Jensen opened with the Vera Rubin AI factory announcements: 700 million tokens per second, a 3,500x throughput increase over two years. Impressive hardware. But the announcements that will reshape the industry, at least to me, aren’t silicon. They are 1) NVIDIA’s collaboration with Groq and its impact on the inference market (see article link) and 2) its OpenClaw strategy.

Jensen Huang called OpenClaw “the most popular open source project in the history of humanity.” He compared it to HTML, Linux, and Kubernetes: infrastructure so foundational it becomes invisible. His exact words: “Every single company in the world needs an OpenClaw strategy.” NVIDIA’s enterprise play, NemoClaw, is built on top of it: a single-command install stack with agent sandboxing, policy engines, privacy routing, and support for hardware ranging from GeForce RTX PCs to DGX Spark to full DGX Stations. The creator, Peter Steinberger, joined OpenAI in February 2026; OpenClaw now sits under an independent foundation.

This is the infrastructure layer that makes the Lenovo anecdote make sense. When you have an open-source agent framework that runs on everything from a desktop GPU to a data center, the demand for secondary hardware explodes. And the token economics of that hardware depend on one variable: how cheap inference can get.

Jensen’s framing deserves attention. He’s predicting that SaaS will evolve into GaaS—Agents-as-a-Service—where software engineers receive annual token budgets of $100,000 or more, analogous to the cloud-credit era. If that’s even directionally correct, the total addressable market for token consumption dwarfs anything we’ve modeled in enterprise software. NVIDIA’s own projections suggest a 1,000,000x increase in AI compute demand over two years: 10,000x per task multiplied by 100x usage growth. That isn’t marketing hyperbole; Fireworks AI reported that LLMs were already processing 1.5 quadrillion tokens per month as of late 2025.

2. The Tokenomics of Agentic AI – The Flywheel in Place

There’s a fundamental discontinuity between human-driven token consumption and agent-driven token consumption that most models underappreciate. A human using ChatGPT or Claude might generate a few thousand tokens per session: a question, a response, maybe a follow-up. An AI agent executing a multi-step workflow (researching, drafting, validating, iterating) consumes 10 to 50x more tokens per task. Auth0 data from January 2026 shows that non-human identities already outnumber humans 50:1 in some enterprise environments. According to Team8’s survey data, 70% of enterprises are running AI agents in production.

The flywheel here is structurally different from anything we’ve seen in software. Agent workflows operate at machine speed: shorter feedback loops, faster iteration cycles, compounding usage patterns that don’t sleep or take weekends. When a human uses a SaaS product, the usage curve is bounded by attention and working hours. When an agent uses an API, the usage curve is bounded only by budget and latency.

Jason Calacanis put it memorably on the All-In podcast: a single Claude agent running at 10–20% capacity costs roughly USD 300 per day, or about USD 100,000 per year. “When do tokens outpace the employee salary?” That question isn’t rhetorical anymore; it’s a line item in CFO models. ByteDance’s Doubao platform alone was processing over 50 trillion tokens per day as of December 2025, with approximately 100 million daily active users. Kimi’s K2.5 model processed 3.07 trillion tokens in its first seven days post-launch. These are consumption numbers that would have been science fiction eighteen months ago.
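To make the salary comparison concrete, here is a minimal back-of-the-envelope sketch in Python. The USD 300 per day figure is the one quoted above; the 365-day year (agents don’t take weekends) and the function names are my own assumptions for illustration, not anything from the podcast.

```python
# Daily agent cost quoted in the text (USD). The always-on, 365-day year
# is an assumption made for this sketch.
DAILY_AGENT_COST_USD = 300
DAYS_PER_YEAR = 365


def annual_agent_cost(daily_cost_usd: float, days: int = DAYS_PER_YEAR) -> float:
    """Annualized token spend for one agent running every day."""
    return daily_cost_usd * days


def breakeven_daily_cost(annual_salary_usd: float, days: int = DAYS_PER_YEAR) -> float:
    """Daily token spend at which an agent costs as much as a given salary."""
    return annual_salary_usd / days


annual = annual_agent_cost(DAILY_AGENT_COST_USD)
print(f"Annual agent cost: USD {annual:,.0f}")
print(f"Daily spend matching a USD 100,000 salary: USD {breakeven_daily_cost(100_000):,.2f}")
```

Run every day of the year, USD 300 per day comes to a bit under USD 110,000, which is consistent with the "about USD 100,000" shorthand.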

3. Chinese LLMs – An Underappreciated Trade in AI?

Here is the core thesis, stated plainly: Chinese LLMs are 10–30x cheaper than their US equivalents on output tokens, and for the vast majority of agentic use cases, the quality delta is mostly irrelevant.

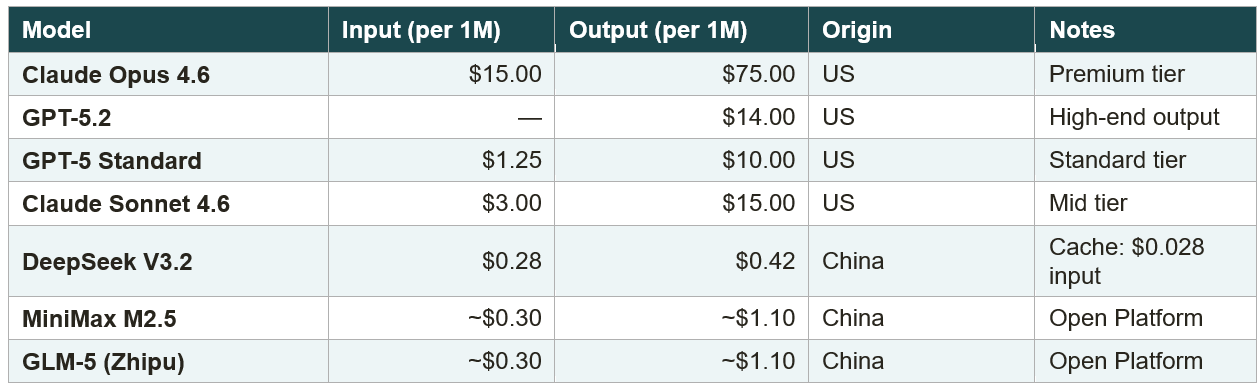

Table 1: Token Pricing Comparison, US vs. Chinese LLMs (March 2026)

Sources: Official API pricing pages; Robonomics AI; company disclosures (March 2026).

DeepSeek V3.2 charges USD 0.42 per million output tokens. GPT-5 Standard charges USD 10.00. Claude Opus 4.6 charges USD 75.00. That is a 24x gap at the low end and a 178x gap at the high end. With DeepSeek’s cache-hit pricing at USD 0.028 per million input tokens (a 90% discount), the economics become almost absurdly favourable for high-volume agent workloads that re-query similar contexts.
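The gaps quoted above can be checked in a few lines. This is a rough sketch: the output prices and the cache-hit price are the ones cited in the text, while the uncached input price is only what the stated 90% discount implies, and the 80% cache-hit rate is a hypothetical workload assumption of mine.

```python
# Output prices in USD per million tokens, as quoted in the text.
OUTPUT_PRICE_USD_PER_M = {
    "DeepSeek V3.2": 0.42,
    "GPT-5 Standard": 10.00,
    "Claude Opus 4.6": 75.00,
}


def price_gap(expensive: float, cheap: float) -> float:
    """How many times more expensive one price is than another."""
    return expensive / cheap


def implied_list_price(cached_price: float, discount: float) -> float:
    """Uncached price implied by a cached price and its stated discount."""
    return cached_price / (1 - discount)


def blended_input_price(hit_rate: float, cached: float, uncached: float) -> float:
    """Average input price per million tokens at a given cache-hit rate."""
    return hit_rate * cached + (1 - hit_rate) * uncached


baseline = OUTPUT_PRICE_USD_PER_M["DeepSeek V3.2"]
for model, price in OUTPUT_PRICE_USD_PER_M.items():
    print(f"{model}: {price_gap(price, baseline):.1f}x DeepSeek's output price")

# Inferred from the text's cache-hit price and 90% discount, not a quoted figure.
uncached = implied_list_price(0.028, 0.90)
# Hypothetical workload: 80% of input tokens hit the cache.
print(f"Blended input price: USD {blended_input_price(0.8, 0.028, uncached):.4f} per million")
```

The more an agent re-queries the same context, the closer its input bill drifts toward the cache-hit price, which is why repetitive agent workloads benefit most.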

The counterargument is quality, and it’s wrong, or at least it’s wrong for the use cases that will drive the majority of token volume. DeepSeek V3.2 Speciale outperformed GPT-5-High on AIME 2025, scoring 96.0% versus 94.6%. The architecture is elegant: a 685-billion-parameter mixture-of-experts (MoE) model that activates only 37 billion parameters per token, giving you the knowledge depth of a 685B model at the inference cost of a 37B one. This isn’t luck. As I always joke, you get the best engineers from the poor countries. China’s limited access to cutting-edge NVIDIA silicon forced a different optimization path.

I call this the “last 999” insight. For most production applications (customer service agents, code generation, document processing, workflow automation), what matters is not the last increment of model quality but the 10–30x price gap. When the price gap is that big, the marginal quality advantage of a US frontier model is economically irrelevant for the vast majority of agent deployments. The consumer, or more precisely the agent orchestration layer, will optimize for cost.

4. China’s AI Export – The OEM Thesis

In the 1990s, Hong Kong and mainland Chinese textile OEMs such as Texwinca and FountainSet (yes, I am that old!) manufactured a huge share of the world’s garments. Nobody knew their names, but their output was everywhere. Their clients were the Gaps, the Ralph Laurens, the H&Ms, and the Uniqlos of the world, but the OEMs captured the volume.

Cheap tokens are China’s true AI-age export—the 2026 equivalent of cheap textiles in the 1990s. Intelligence as commodity.

MiniMax, Zhipu, and DeepSeek are effectively the Texwinca and FountainSet of the AI era, operating as the OEM infrastructure for the global AI agent economy. Historically, a US-made t-shirt was arguably higher quality but cost three times as much to make, allowing the "good enough" Chinese counterpart to dominate global supply chains. Today the same thing is happening in AI, and it shows up in the cost per token.

This cost-to-performance arbitrage is exacerbated by the fact that AI infrastructure is completely decoupled from consumer brand equity. In AI 3.0, "brand" is being replaced by a strictly quantitative trust stack: model weights, confidence coefficients, latency benchmarks, and price. An agent orchestration layer programmatically choosing between API endpoints is entirely agnostic to the logo; it optimizes purely for speed, output quality, and unit economics. This also means that the “OEM” layer captures more value.
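A minimal sketch of what "agnostic to the logo" looks like in practice: a router that filters endpoints by a quality floor and a latency ceiling, then picks the cheapest survivor. Everything here is hypothetical, including the `Endpoint` fields, the `pick_endpoint` function, the endpoint names, and the placeholder numbers; it is not any real framework’s API or any vendor’s measured performance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str                 # label only; the router never branches on it
    usd_per_m_output: float   # price per million output tokens
    p50_latency_ms: float     # median latency from your own measurements
    quality: float            # 0..1 score from your own evaluation suite


def pick_endpoint(endpoints, min_quality: float, max_latency_ms: float) -> Endpoint:
    """Return the cheapest endpoint that clears the quality and latency bars."""
    eligible = [
        e for e in endpoints
        if e.quality >= min_quality and e.p50_latency_ms <= max_latency_ms
    ]
    if not eligible:
        raise ValueError("no endpoint meets the quality and latency requirements")
    return min(eligible, key=lambda e: e.usd_per_m_output)


# Placeholder values for illustration only, not real benchmark or pricing data.
CANDIDATES = [
    Endpoint("budget-model", usd_per_m_output=0.50, p50_latency_ms=900, quality=0.88),
    Endpoint("frontier-model", usd_per_m_output=15.00, p50_latency_ms=700, quality=0.93),
]

routine = pick_endpoint(CANDIDATES, min_quality=0.85, max_latency_ms=1000)
demanding = pick_endpoint(CANDIDATES, min_quality=0.90, max_latency_ms=1000)
print(routine.name, demanding.name)
```

The design choice worth noticing is that quality acts as a threshold rather than something to maximize. Once a task’s bar is cleared, price decides, and the more expensive endpoint only wins the tasks whose bar the cheaper one cannot reach.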

This structural shift is already visible in the fundamental metrics of leading Chinese AI infrastructure companies. Moonshot AI's Kimi is a prime example: following the release of its K2.5 model, global paying users quadrupled in a matter of days, and its overseas revenue has now officially surpassed its domestic revenue. Furthermore, the K2.5 model generated more revenue in its first 20 days post-launch than Moonshot earned in all of 2025. This rapid commercialization is reflected directly in its valuation trajectory, which has more than quadrupled from USD 4.3 billion in late 2025 to USD 18 billion as of March 2026.

Simultaneously, a massive consolidation is happening in the enterprise token consumption stack. According to Frost & Sullivan data for the second half of 2025, Chinese models now completely dominate domestic enterprise usage: Alibaba's Qwen holds 32.1%, ByteDance's Doubao holds 21.3%, and DeepSeek captures 18.4%. Together, these three capture over 70% of the market.

This represents China’s most important export since manufacturing itself. They are no longer just exporting hardware, apps, or platforms; they are exporting intelligence as a commodity. The global dependency on this infrastructure is already staggering: partners at a16z recently noted that 80% of the startups pitching to them are building on Chinese open-source models.

5. Valuation Scorecard

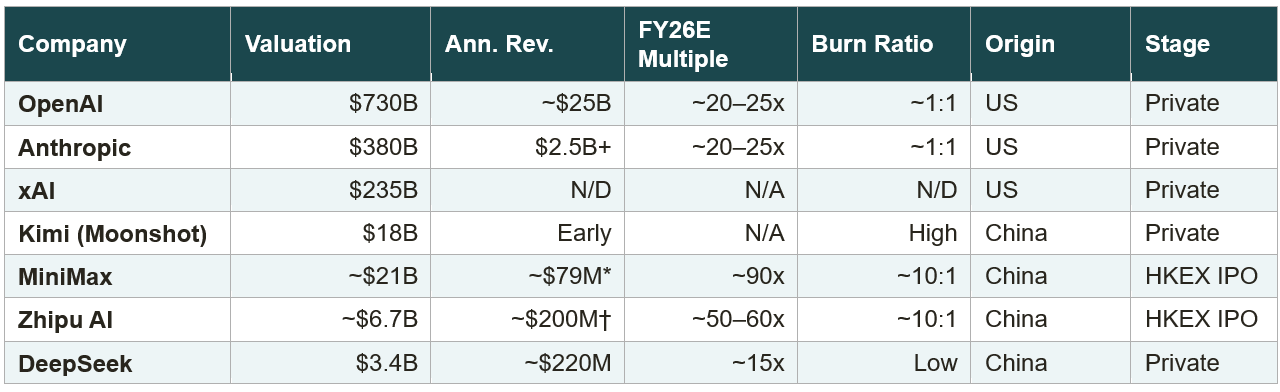

Table 2: LLM Valuation Scorecard, US vs. Chinese (March 2026)

MiniMax FY2025 total: AI-native product revenue $53.1M + Open Platform $26.0M. † Zhipu tracking to cross $200M in 2026. Sources: Bloomberg, TechNode, Robonomics AI, HKEX filings, company disclosures.

The paradox jumps off the page. Chinese LLMs are “cheap but expensive.” MiniMax trades at 90x 2026 PE. Zhipu trades at 50–60x. These are higher relative multiples than OpenAI and Anthropic at 20–25x, despite combined Chinese LLM industry revenue sitting below USD 500 million and burn ratios running 10:1. The market is pricing in explosive growth, but the growth has to actually materialize before the burn consumes the capital.

OpenAI raised USD 110 billion at a USD 730 billion pre-money valuation. It’s projecting USD 14 billion in losses for 2026 and cumulative losses of USD 115 billion through 2029. Anthropic raised USD 30 billion at a USD 380 billion post-money valuation, with Claude Code alone on a USD 2.5 billion+ run rate that has doubled since January 2026. These are staggering numbers, but at least the revenue base is staggering too.

For the Chinese players, the question isn’t whether their tokens are cheap; that’s a given. Rather, it is whether they can monetize the flywheel before US players compress the price gap from above. The window may be two to three years. If agent-driven token consumption scales as fast as the early data suggests, the volume advantage could be decisive.

Where I see the asymmetric opportunity is not in the foundation models themselves, but in the infrastructure and OEM layer they enable. The companies building agent orchestration platforms, inference optimization middleware, and vertical applications on top of cheap Chinese tokens are the picks-and-shovels play of this cycle. The token providers may end up commoditized. The builders on top may capture the margin. It’s the textile analogy all the way through: the OEM makes the fabric, but the brand captures the value. Except this time, the fabric is intelligence, and the brand is whatever agent framework delivers the best user outcome at the lowest cost.

6. The AGI Trade

Good-value intelligence, running on advanced clouds and commoditized edge devices, orchestrated by open-source agents. That’s the trade. Chinese LLM providers are building the OEM layer for the agentic economy. Whether the companies themselves capture the value, or whether the value migrates to the orchestration and application layers above them, is the billion-dollar question.

I don’t have certainty. But I know where I’m looking.