2026-03-17

By Esther Wong

Jensen Validated the Cerebras Thesis — In His Own Words

Jensen Huang's GTC 2026 keynote was, in a lot of ways, the Cerebras story told from a different stage.

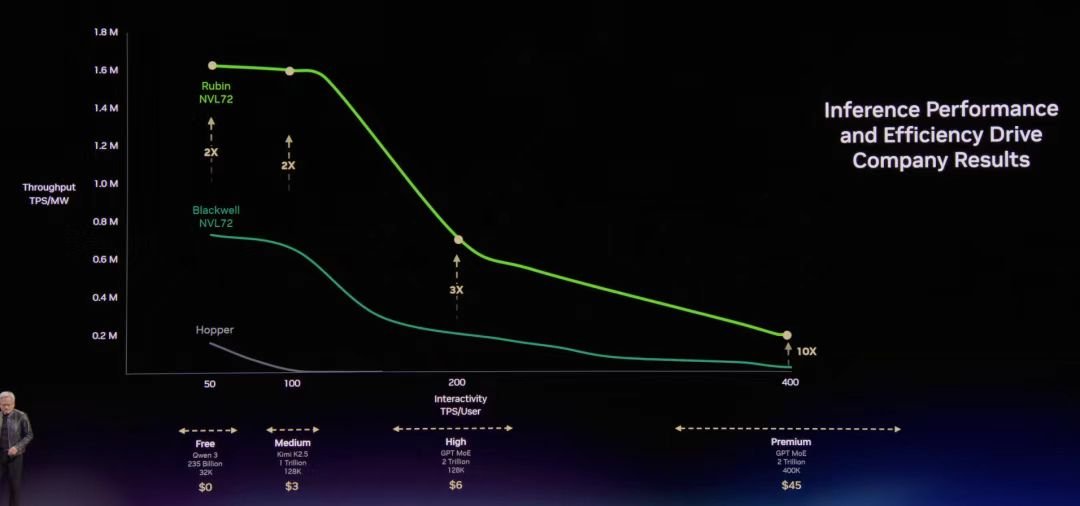

The big idea Jensen kept coming back to: how fast AI responds to you isn't just a nice-to-have; it's what determines whether an AI product makes money. He introduced the concept of a speed curve and dropped a line that's going to get quoted a lot: "The most valuable tokens are the fast tokens." The basic intuition is that once AI goes from generating a few dozen words per second to hundreds or even thousands, entirely new kinds of applications become possible. Think agents that can reason through complex problems in real time without making you wait. The revenue opportunity isn't a straight line; it explodes once you're on the fast end of that curve. That's the exact bet Cerebras has been making since it started.

What was striking is what Jensen also admitted: Nvidia's own flagship GPU systems hit a ceiling at the high end of that speed curve. The bottleneck isn't raw computing power; it's how fast the chip can read data from memory. GPUs store their data in memory chips that sit off the main processor, and there's a physical limit to how fast information can travel between them. It's a bit like having an incredibly fast chef who still has to keep running to a refrigerator across the kitchen. Nvidia knows this. Jensen said it out loud.

The Groq Integration: What It Confirms and What It Concedes

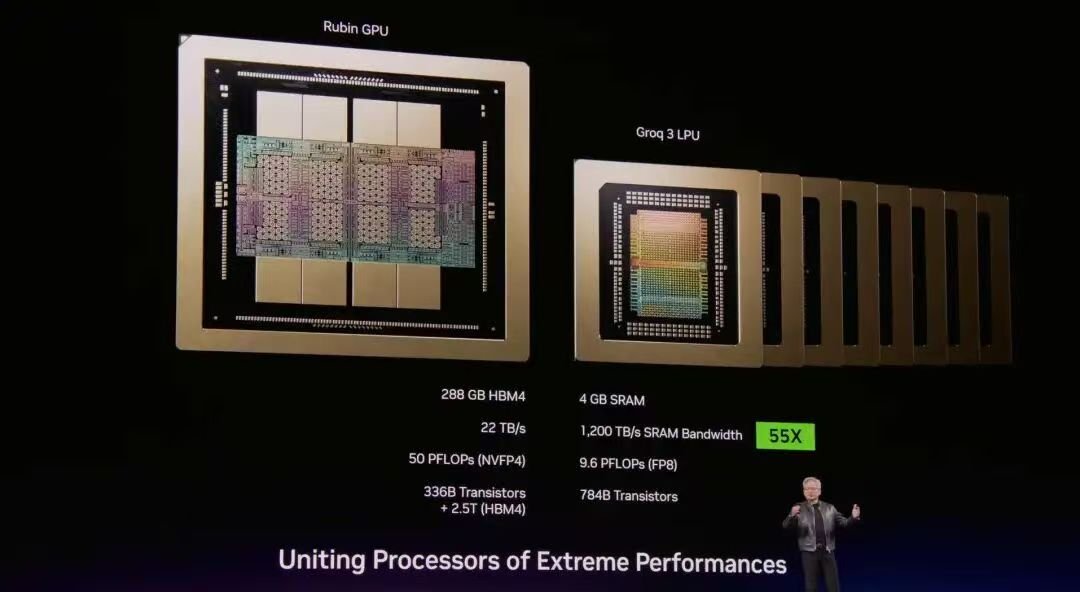

The biggest hardware announcement was a new combined system: Nvidia's Vera Rubin GPUs paired with Groq's LPU chips, all managed by Nvidia's software stack. It's a deliberate division of labor: the GPU handles the "reading in" or pre-fill phase (digesting a long prompt or document, which is computationally intensive), while the Groq chips handle the "writing out" or decode phase (generating the actual response word by word, which demands ultra-fast memory access).

The reason for this split is that the two tasks are fundamentally different. Generating each new word in a response requires reading the entire model's weights from memory at every single step. If that memory sits far from the processor, there's an unavoidable delay that compounds with every word. Groq's chips address this by keeping memory on the chip itself: much closer, much faster.
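To make the memory argument concrete, here is a minimal back-of-the-envelope sketch. It assumes the simplest possible model of decode: one stream, and every generated token reads all of the model's weights once, so speed is capped at memory bandwidth divided by model size. The function name and all three numeric values are illustrative placeholders, not figures from the keynote or from any vendor's spec sheet, and real systems also deal with batching, caching, and compute limits that this ignores.

```python
def max_decode_tokens_per_second(weight_bytes: float,
                                 bandwidth_bytes_per_s: float) -> float:
    """Upper bound on tokens/s for one stream, assuming each generated
    token requires reading every weight from memory exactly once."""
    if weight_bytes <= 0 or bandwidth_bytes_per_s <= 0:
        raise ValueError("sizes and bandwidths must be positive")
    return bandwidth_bytes_per_s / weight_bytes


# Placeholder values chosen only for illustration (NOT vendor specifications).
WEIGHT_BYTES = 70e9          # hypothetical: 70B parameters at 1 byte each
OFF_CHIP_BANDWIDTH = 1e12    # hypothetical: 1 TB/s to memory beside the processor
ON_CHIP_BANDWIDTH = 100e12   # hypothetical: 100 TB/s to memory on the chip

if __name__ == "__main__":
    for label, bandwidth in [("off-chip", OFF_CHIP_BANDWIDTH),
                             ("on-chip", ON_CHIP_BANDWIDTH)]:
        bound = max_decode_tokens_per_second(WEIGHT_BYTES, bandwidth)
        print(f"{label}: at most {bound:.1f} tokens/s")
```

Under this simplified model the speed ceiling scales directly with memory bandwidth, which is why moving memory onto the chip matters so much for the decode phase.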

The new Groq chip doubles the on-chip memory versus the original and delivers impressive speed gains. Jensen claimed the combined system is 35x more efficient per unit of electricity than the prior generation, and could unlock 10x more revenue potential for the largest AI models. Big numbers — but they need context.

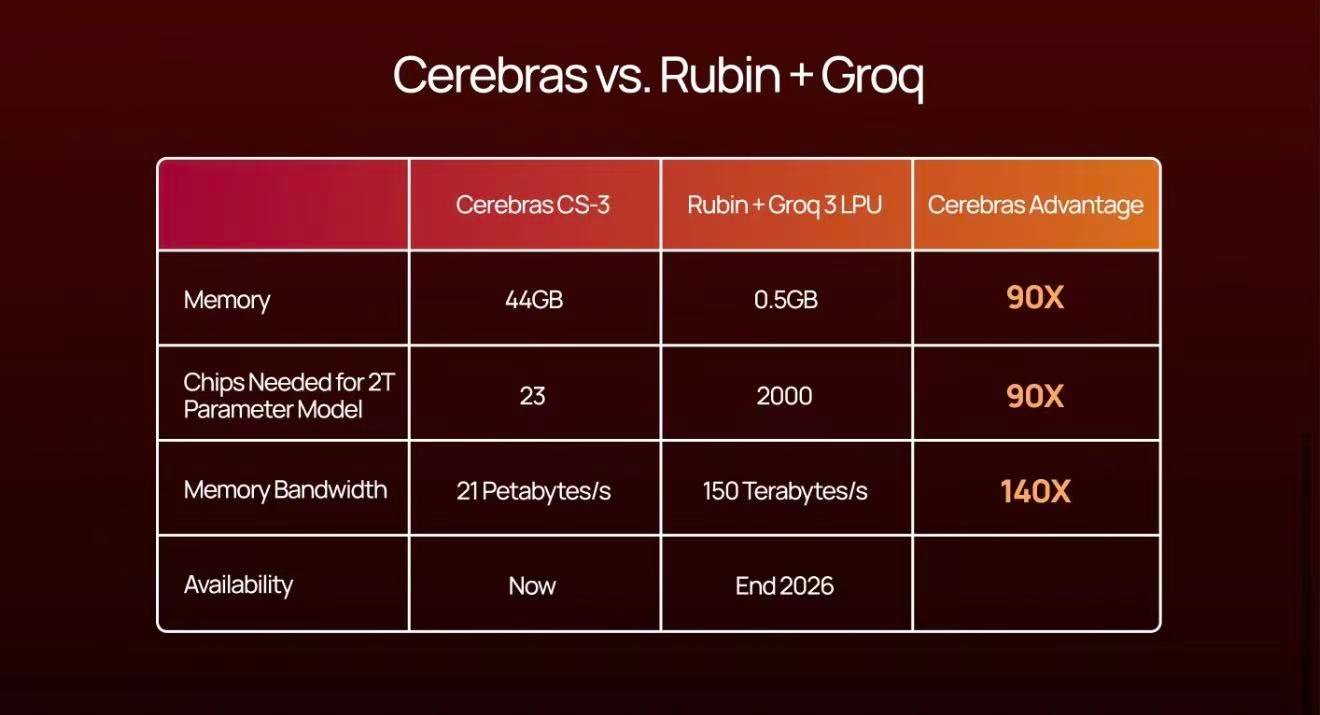

When you actually look at the chip specs, you start to understand why Cerebras is in a different category entirely.

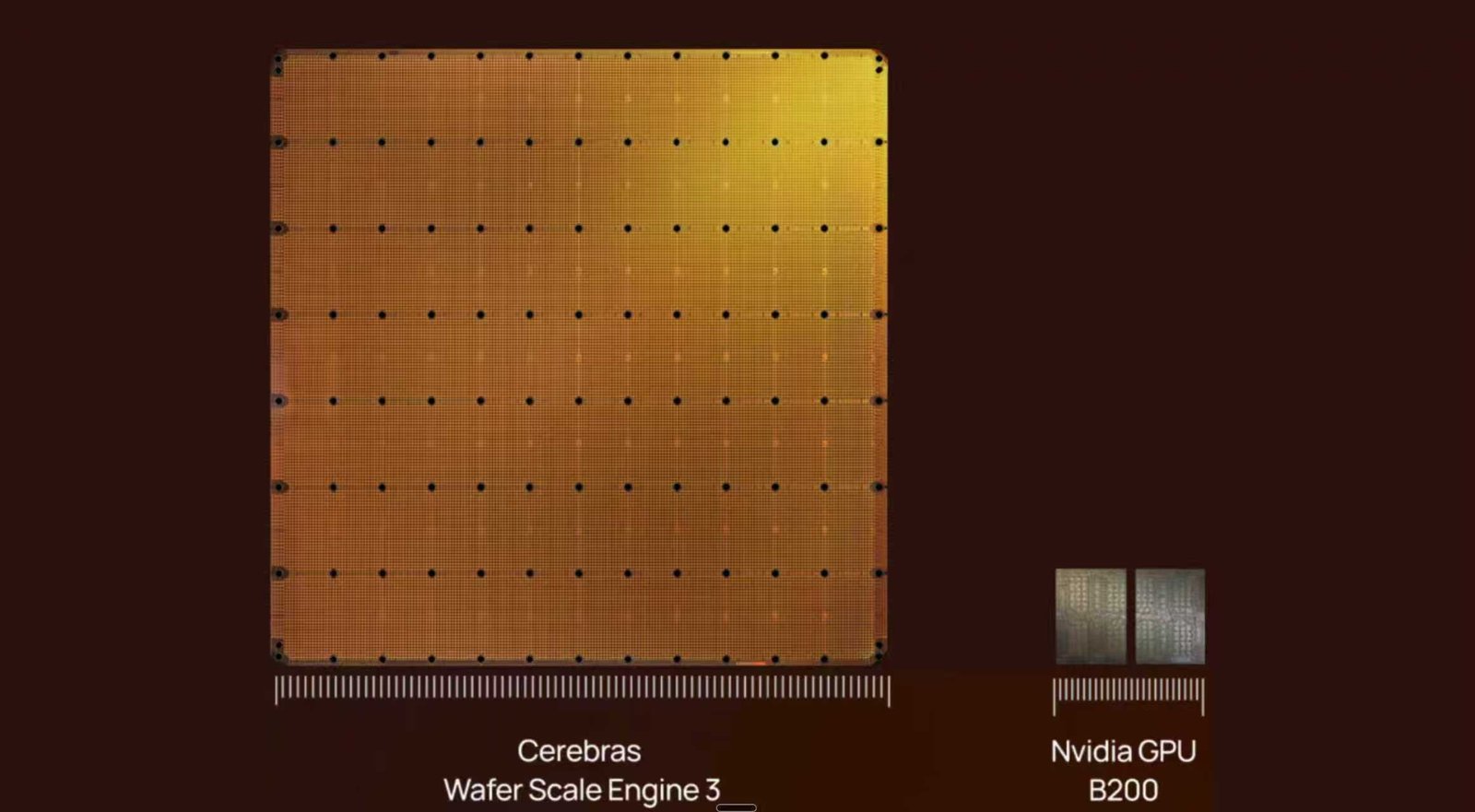

Where Cerebras Stands — and Why Wafer Scale Is Different

Here's a useful way to think about the memory comparison. Each Groq chip holds about 500 MB of fast on-chip memory. Each Cerebras chip, which is actually an entire silicon wafer the size of a dinner plate, holds 44 GB. That's roughly 88x more memory on a single device.

Why does this matter? Today's largest AI models are enormous. A cutting-edge 2-trillion-parameter model takes up roughly 2 terabytes of storage at 8-bit precision, or about 1 terabyte at 4-bit. Even at the smaller figure, running that model at real speed on Groq chips would take around 2,000 individual chips all working in sync. Running it on Cerebras would take about 23 WSEs. Fewer devices means fewer connections between them, and fewer connections means less lag, less complexity, and less that can go wrong.
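The device counts above are simple division, and a short sketch makes the assumptions visible. It uses only the figures quoted in this article (500 MB per Groq chip, 44 GB per Cerebras wafer, a 2-trillion-parameter model) and counts weights only, ignoring any working memory the model also needs. The function names are made up for illustration. Note that the counts of about 2,000 and 23 correspond to roughly 1 TB of weights, which is what 2 trillion parameters occupy at 4 bits each; at 8 bits each (2 TB) both counts double.

```python
MB = 10**6
GB = 10**9

GROQ_ON_CHIP_BYTES = 500 * MB      # per-chip figure quoted in the article
CEREBRAS_ON_CHIP_BYTES = 44 * GB   # per-wafer figure quoted in the article


def model_size_bytes(num_params: int, bits_per_param: int) -> int:
    """Bytes needed to hold the weights alone at the given precision."""
    return num_params * bits_per_param // 8


def devices_needed(model_bytes: int, bytes_per_device: int) -> int:
    """Smallest number of devices whose combined on-chip memory fits the weights."""
    return -(-model_bytes // bytes_per_device)  # integer ceiling division


if __name__ == "__main__":
    params = 2 * 10**12  # 2 trillion parameters
    for bits in (4, 8):
        size = model_size_bytes(params, bits)
        print(f"{bits}-bit weights: {size / 10**12:.0f} TB, "
              f"{devices_needed(size, GROQ_ON_CHIP_BYTES)} Groq chips, "
              f"{devices_needed(size, CEREBRAS_ON_CHIP_BYTES)} Cerebras wafers")
```

Whatever precision is assumed, the ratio between the two device counts stays near the 88x memory gap, which is the point of the comparison.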

When you're doing inference, every millisecond counts. Synchronizing thousands of chips for every single word output creates a coordination problem that compounds at scale. Cerebras largely sidesteps that problem because most of the communication stays within a single piece of silicon, where it's orders of magnitude faster.

Disaggregated Inference: Smart Architecture, But Not the Whole Story

The split between "reading in" and "writing out" that Nvidia is building around isn't just a marketing angle; it reflects a real understanding of what AI inference actually needs. Different stages of generating a response have genuinely different demands, and Nvidia is now engineering its systems to match.

Nvidia takes this even further inside the system, splitting operations at an even finer level between the GPU and the Groq chip. It's clever engineering that squeezes complementary strengths from each component.

It's also worth noting that Amazon Web Services has a competing chip called Trainium 3, built on a cutting-edge manufacturing process and positioned as a high-efficiency option for both training and inference. Nvidia has even opened up its own connectivity technology to allow these third-party chips to plug in. The broader takeaway: the idea of using specialized chips for different tasks is no longer a quirky alternative to the GPU monoculture; it's becoming the standard.

Speed at the Frontier: How Cerebras Compares

To be precise: on models up to about 70 billion parameters, which covers many of today's most widely used AI systems, Cerebras systems already exceed 1,000 tokens per second per user. Jensen identified 400–600 tokens per second as the practical ceiling for Nvidia's flagship GPU cluster on interactive tasks. Cerebras is well above that.
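To see what those per-user speeds mean in practice, here is a rough sketch of how long a multi-step agent task would take at each rate. The rates (400, 600, and 1,000 tokens per second) come from the paragraph above, with 1,000 treated as a floor since Cerebras is described as exceeding it. The workload of 10 reasoning steps at 500 tokens each is a hypothetical example, the function name is invented for illustration, and the sketch counts generation time only, ignoring prompt processing and network delay.

```python
def generation_seconds(tokens_per_step: int, steps: int,
                       tokens_per_second: float) -> float:
    """Time spent purely on generating tokens for a multi-step task."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return tokens_per_step * steps / tokens_per_second


if __name__ == "__main__":
    # Hypothetical workload: 10 reasoning steps of 500 tokens each.
    steps, tokens_per_step = 10, 500
    for rate in (400, 600, 1000):
        wait = generation_seconds(tokens_per_step, steps, rate)
        print(f"{rate} tokens/s: {wait:.1f} s of generation")
```

For this assumed workload the wait drops from 12.5 seconds at 400 tokens per second to 5 seconds at 1,000, and the gap widens as agents chain more steps together.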

As models get larger, the advantage compounds. In systems like Groq's, adding more chips to handle bigger models also multiplies the coordination overhead. In Cerebras's architecture, adding more systems to handle bigger models is relatively clean: only the thin layer of communication between systems matters, while everything inside each wafer remains local and fast.

What Jensen's Forecast Means for the Market

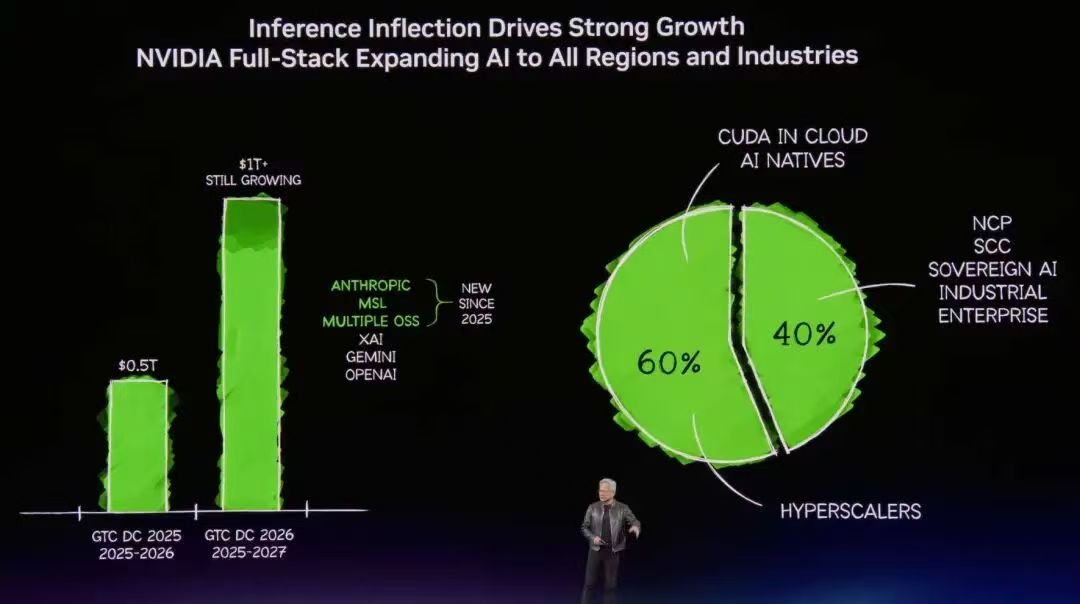

Jensen projected USD 1 trillion in purchase orders for Nvidia's next-generation systems through 2027, which is double his prior estimate, driven by a surge in inference demand. OpenAI alone is reportedly planning 250 megawatts of dedicated inference capacity in 2026 with Cerebras. As AI models get more capable, the amount of compute needed per interaction keeps growing, and the economics increasingly favour speed.

The market Jensen is describing (fast, interactive, low-latency AI for the biggest and most capable models) is precisely the market Cerebras was designed for. The fact that Nvidia's most sophisticated inference product now uses on-chip memory as its critical component is the clearest possible signal that this architectural approach isn't a niche bet. It's the direction the industry is moving.

The question for the next 18 months isn't whether fast, on-chip memory wins, but which architecture handles the largest, most complex models most efficiently as the frontier keeps pushing outward. Cerebras's answer to that question hasn't changed. GTC 2026 just got a lot more people asking it, and seeing the answer more clearly.